Every organisation is focused on doing more with its data. But how do you gather that data and use it to make informed decisions? The answer involves a number of stages, but one of the key steps is to get your data into a cloud data platform like Azure, where it is accessible and easy to analyse.

Azure Data Factory is a key technology for connecting to an organisation's data and moving it into the cloud.

What is Azure Data Factory?

Azure Data Factory is Microsoft Azure's data pipeline service. This kind of tool is also described as extract-transform-load (ETL), extract-load-transform (ELT) or data integration. Azure Data Factory focuses on connecting to data sources, getting data into the cloud and putting it in the right state for analysis.

Azure Data Factory does not store any data itself, so it is normally paired with a data warehouse or data lake, where the data is stored and the analytics are run.

Data Pipeline

Creating a data pipeline is one of the most important, yet often overlooked, challenges facing data professionals. A steady flow of live, clean and relevant data into the cloud enables a wide range of analysis and AI capabilities.

A data pipeline is a logical grouping of activities that together perform a unit of work. For example, a pipeline can contain a group of activities that ingest data from an on-premises SQL database, run a query to clean the data and then push the cleaned data into a data warehouse.

The benefit of this is that the pipeline allows you to manage the activities as a set instead of managing each one individually. The activities in a pipeline can be chained together to operate sequentially, or they can operate independently in parallel.
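The difference between chained and parallel activities can be sketched in a few lines of Python. This is an illustration only, not the Data Factory API: the pipeline and activity names are hypothetical, and the `depends_on` lists are a simplified stand-in for the dependency links between activities in a pipeline definition. The sketch groups activities into stages, where everything in one stage could run in parallel.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical pipeline: all names are invented for illustration and the
# per-activity settings (sources, queries, destinations) are omitted.
pipeline = {
    "name": "IngestSalesData",
    "activities": [
        {"name": "CopyFromOnPremSql", "depends_on": []},
        {"name": "CopyFromFileShare", "depends_on": []},
        {"name": "CleanData",
         "depends_on": ["CopyFromOnPremSql", "CopyFromFileShare"]},
        {"name": "LoadWarehouse", "depends_on": ["CleanData"]},
    ],
}


def execution_stages(pipeline):
    """Group activities into stages. Activities in the same stage do not
    depend on each other, so they could run in parallel; each stage must
    finish before the next one starts."""
    graph = {a["name"]: set(a["depends_on"]) for a in pipeline["activities"]}
    sorter = TopologicalSorter(graph)
    sorter.prepare()
    stages = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted for a stable order
        stages.append(ready)
        sorter.done(*ready)
    return stages


stages = execution_stages(pipeline)
for number, stage in enumerate(stages, start=1):
    print(f"Stage {number}: {', '.join(stage)}")
```

Here the two copy activities have no dependencies, so they land in the first stage together, while the cleaning and loading steps are chained after them.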

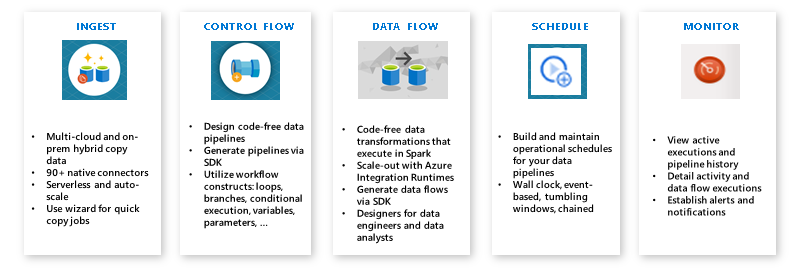

What does Azure Data Factory do?

The Data Factory service is used to create data pipelines that move and transform data, and then to run those pipelines on a schedule. The result is an automated flow of data through your organisation.

Data Factory's work breaks down into three main stages:

- Connect and Collect – The most important thing Azure Data Factory does is provide an easy way to connect to your data. Data is normally siloed throughout your organisation in a range of different systems. Data Factory can connect to a wide range of data sources, including SaaS services, file shares, on-premises databases and more. It then extracts data from these sources on a schedule you set and copies it into the cloud.

- Transform and Enrich – Once the data is in the cloud, simple transformations can be run on it to combine data from multiple sources, clean bad data and create new calculated fields.

- Publish – Azure Data Factory can then publish this data to your chosen Azure service, such as Azure Data Lake or Azure Synapse Analytics (data warehouse).
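To make the three stages concrete, here is a minimal sketch in plain Python. It does not call Data Factory; the source rows, field names and the `warehouse_table` list are all assumptions made up for illustration, with in-memory lists standing in for real source systems and a real warehouse.

```python
# Connect and Collect: two hypothetical sources, represented as lists of rows.
crm_rows = [
    {"customer_id": 1, "name": "Acme Ltd"},
    {"customer_id": 2, "name": "Globex"},
]
order_rows = [
    {"customer_id": 1, "quantity": 3, "unit_price": 10.0},
    {"customer_id": 2, "quantity": None, "unit_price": 5.0},  # bad row
    {"customer_id": 2, "quantity": 4, "unit_price": 5.0},
]


def transform(customers, orders):
    """Transform and Enrich: combine the two sources, drop rows with a
    missing quantity and add a calculated 'total' field."""
    names = {c["customer_id"]: c["name"] for c in customers}
    result = []
    for order in orders:
        if order["quantity"] is None:
            continue  # clean bad data
        result.append({
            "customer": names[order["customer_id"]],
            "quantity": order["quantity"],
            "total": order["quantity"] * order["unit_price"],
        })
    return result


def publish(rows, destination):
    """Publish: append the prepared rows to the destination table and
    return how many rows were written."""
    destination.extend(rows)
    return len(rows)


warehouse_table = []  # stand-in for a table in a data lake or warehouse
published = publish(transform(crm_rows, order_rows), warehouse_table)
print(f"Published {published} rows")
```

In a real deployment each function would correspond to one or more pipeline activities, and the run would be triggered on a schedule rather than by hand.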

Azure Data Factory is key to success with your data. It facilitates one of the core steps in creating a modern data platform: capturing your data, moving it into the cloud and transforming it for analysis. To discover how you could start using Data Factory, contact us today.